The project is to develop a physical display for a set of intelligent agents moving through a computed space. Virtual environments are generally experienced through projections of the computed environment; Lacing instead makes a physical reality generated through the emergent behaviour of a set of intelligent agents.

The agents move across the white plane of the computer screen. Their vision is limited: they can 'see' only a given territory in front of them. They are 'born' at a set of given points in the space, after which they search the space for attractor points, where they 'die'. The agents know not to bump into each other, and when one agent is in front of another, the second can follow it through the space towards their destination.
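The agent behaviour described above (a limited vision cone, steering towards an attractor, dying on arrival, and following rather than bumping into the agent in front) can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's implementation: the vision range, cone angle, speed and arrival radius are hypothetical values, and `Agent`, `can_see` and `step` are hypothetical names.

```python
import math
from dataclasses import dataclass

# Hypothetical parameters: the source gives no values for these.
VISION_RANGE = 50.0                   # pixels an agent can "see" ahead
VISION_HALF_ANGLE = math.radians(45)  # half-width of the vision cone
SPEED = 2.0                           # pixels moved per update
ARRIVAL_RADIUS = 5.0                  # distance at which an agent "dies" at its attractor


@dataclass
class Agent:
    x: float
    y: float
    heading: float  # radians
    alive: bool = True


def angle_diff(a: float, b: float) -> float:
    """Smallest signed difference a - b, wrapped to [-pi, pi)."""
    return (a - b + math.pi) % (2 * math.pi) - math.pi


def can_see(agent: Agent, px: float, py: float) -> bool:
    """True if point (px, py) lies inside the agent's limited vision cone."""
    dx, dy = px - agent.x, py - agent.y
    dist = math.hypot(dx, dy)
    if dist == 0 or dist > VISION_RANGE:
        return False
    return abs(angle_diff(math.atan2(dy, dx), agent.heading)) <= VISION_HALF_ANGLE


def step(agent: Agent, others: list, target: tuple) -> None:
    """Advance one update: steer to the attractor, or follow a visible agent ahead."""
    if not agent.alive:
        return
    tx, ty = target
    if math.hypot(tx - agent.x, ty - agent.y) <= ARRIVAL_RADIUS:
        agent.alive = False  # reached the attractor point: the agent "dies"
        return
    desired = math.atan2(ty - agent.y, tx - agent.x)
    ahead = [o for o in others if o is not agent and o.alive and can_see(agent, o.x, o.y)]
    if ahead:
        nearest = min(ahead, key=lambda o: math.hypot(o.x - agent.x, o.y - agent.y))
        if math.hypot(nearest.x - agent.x, nearest.y - agent.y) < 2 * SPEED:
            return  # too close: wait instead of bumping into the agent in front
        desired = nearest.heading  # fall in behind and follow the agent in front
    agent.heading = desired
    agent.x += SPEED * math.cos(agent.heading)
    agent.y += SPEED * math.sin(agent.heading)
```

Following the heading of the nearest visible agent is one simple way to get the "follow each other" behaviour; the project may well use a different steering rule.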

There can be any number of agents in the space: here there are 16.

The agents are interfaced with a camera whose image is mapped onto the two-dimensional surface they navigate. This means that the agents can 'see' objects in their space: black objects show up as impenetrable boundaries that the agents must navigate around.

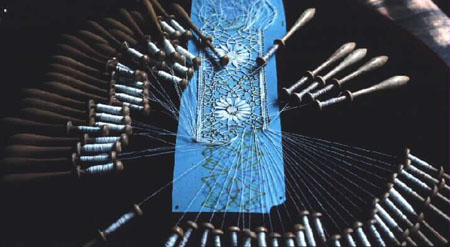
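The camera interface can be thought of as turning each frame into an obstacle map that the agents consult before moving. A minimal sketch in plain Python, assuming each frame arrives as rows of grayscale values (0 to 255) and is stretched to cover the agents' window; the threshold and the names `obstacle_mask`, `is_blocked` and `try_move` are hypothetical.

```python
import math

# Hypothetical threshold: camera pixels darker than this count as "black".
BLACK_THRESHOLD = 64


def obstacle_mask(frame, threshold=BLACK_THRESHOLD):
    """Turn a grayscale frame (rows of 0-255 values) into a boolean obstacle map."""
    return [[value < threshold for value in row] for row in frame]


def is_blocked(mask, x, y, window_w, window_h):
    """Map a window-pixel position onto the camera mask; True means impenetrable."""
    rows, cols = len(mask), len(mask[0])
    col = math.floor(x * cols / window_w)
    row = math.floor(y * rows / window_h)
    if not (0 <= row < rows and 0 <= col < cols):
        return True  # treat anything outside the camera image as a wall
    return mask[row][col]


def try_move(mask, x, y, new_x, new_y, window_w, window_h):
    """Accept a proposed move only if the destination is not a black obstacle."""
    if is_blocked(mask, new_x, new_y, window_w, window_h):
        return x, y  # blocked: stay put so the agent can choose another heading
    return new_x, new_y
```

Rejecting a blocked move and keeping the old position is the simplest option; a fuller version would make the agent turn along the boundary.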

The idea for Lacing is to interface the agents with the physical environment of a lacing machine: a plane over which threads are pleated following the paths of the agents.
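Interfacing the agents with the machine requires translating their screen positions into positions on the physical plane. A minimal sketch, assuming positions arrive in window pixels and scale linearly to millimetres on the plane; the window size, plane size, the y-axis flip and the name `pixel_to_plane` are all hypothetical, not taken from the project.

```python
# Hypothetical dimensions: the source states neither the window size nor the plane size.
WINDOW_W, WINDOW_H = 640, 480          # pixels
PLANE_W_MM, PLANE_H_MM = 600.0, 450.0  # millimetres


def pixel_to_plane(x_px: float, y_px: float) -> tuple:
    """Scale window-pixel coordinates to millimetres on the physical plane.

    Screen y grows downwards; it is flipped here so that plane y grows upwards,
    which is an assumption about the machine's coordinate frame.
    """
    x_mm = x_px * PLANE_W_MM / WINDOW_W
    y_mm = (WINDOW_H - y_px) * PLANE_H_MM / WINDOW_H
    return x_mm, y_mm
```

Keeping the window and plane in the same aspect ratio (4:3 here) means the agents' paths are not distorted when transferred to the machine.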

What we know about the agents is the continuous position of each agent as it moves across the plane. The data looks as follows:

A 0 290.895782, 113.803864, -0.006673

A 1 457.739136, 134.849991, -3.042692

A 2 279.716003, 135.710648, 0.017804

A 3 442.869354, 137.079636, -3.083059

These values are calibrated to the pixel size of the window and are updated at the processing speed of the computer, that is, as fast as the computer can compute each new step, which is very frequently.
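Reading this stream could look like the sketch below, which parses records of the form shown and keeps only the latest sample per agent. It assumes the third value on each line is the agent's heading in radians (the sample values lie between -π and π, but the source does not say what the value is); `AgentSample`, `parse_line` and `latest_positions` are hypothetical names.

```python
from typing import NamedTuple


class AgentSample(NamedTuple):
    agent_id: int
    x: float        # window pixels
    y: float        # window pixels
    heading: float  # assumed: heading angle in radians


def parse_line(line: str) -> AgentSample:
    """Parse one record of the form 'A <id> <x>, <y>, <heading>'."""
    tag, agent_id, rest = line.split(maxsplit=2)
    if tag != "A":
        raise ValueError(f"unexpected record tag: {tag!r}")
    x, y, heading = (float(v) for v in rest.split(","))
    return AgentSample(int(agent_id), x, y, heading)


def latest_positions(lines):
    """Keep only the most recent sample for each agent, skipping blank lines."""
    latest = {}
    for line in lines:
        if line.strip():
            sample = parse_line(line)
            latest[sample.agent_id] = sample
    return latest
```

Because the positions update so quickly, keeping only the latest sample per agent is a reasonable default when the consumer (such as a physical machine) runs far slower than the simulation.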

The aim is to build a model that allows a set of threads to be pleated/laced according to the agents' movements. As the agents change course (in response to the camera interface), they change the pleating/lacing of the threads.
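One possible first step towards such a model is to record each agent's path as a polyline that a thread could follow. This sketch is an assumption, not the project's method: `ThreadPaths` and `MIN_SPACING` are hypothetical, and the spacing filter is one way to thin out the very high-rate position stream. How the threads are then pleated or laced on the machine is not specified here.

```python
import math
from collections import defaultdict

# Hypothetical: minimum distance (pixels) an agent must travel before a new
# thread point is recorded, which thins out the high-rate position stream.
MIN_SPACING = 5.0


class ThreadPaths:
    """Accumulate each agent's trajectory as a polyline a thread could follow."""

    def __init__(self, min_spacing: float = MIN_SPACING):
        self.min_spacing = min_spacing
        self.paths = defaultdict(list)  # agent id -> list of (x, y) points

    def add(self, agent_id: int, x: float, y: float) -> None:
        """Record a position unless it is too close to the previous point."""
        path = self.paths[agent_id]
        if path:
            px, py = path[-1]
            if math.hypot(x - px, y - py) < self.min_spacing:
                return  # too close to the previous point: skip it
        path.append((x, y))

    def length(self, agent_id: int) -> float:
        """Total length of one agent's path, e.g. to estimate the thread needed."""
        path = self.paths.get(agent_id, [])
        return sum(math.hypot(x2 - x1, y2 - y1)
                   for (x1, y1), (x2, y2) in zip(path, path[1:]))
```

When an agent changes course in response to the camera, the new points simply bend its polyline, so the recorded thread path follows the change.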