SafeTRIP

[2009-Current]

I am the lead investigator at UCL and product owner on the large-scale EC co-funded FP7 R&D project SafeTRIP (overall budget €11 million).

In addition, I am responsible for the following:

• User Requirements

• Managing development of 2 services (Info Explorer and Driver Alertness)

• Managing the assessment of trials (User, Commercial, Safety, Security & Environment aspects)

• Managing the development of a driving simulator for driver attention/distraction studies

• Dissemination activities (publications, tutorial development and cross-fertilisation)

The SafeTRIP project has a consortium of 20 EU companies and organisations, bringing together service operators, road operators, telecommunication providers and other academic and technical partners. For more details see the website here.

The general objective of the SafeTRIP (Satellite Applications For Emergency handling, Traffic alerts, Road safety and Incident Prevention) project is to improve the use of road and transport infrastructures and to improve the alert chain in case of incidents. SafeTRIP benefits from a new satellite technology: the S-Band, which is optimised for 2-way communications for on-board vehicle units interoperable with Galileo and UMTS systems.

| The SafeTRIP platform

architecture |

|

User Requirement capture |

|

|

|

|

|

|

| Defining SafeTRIP services |

|

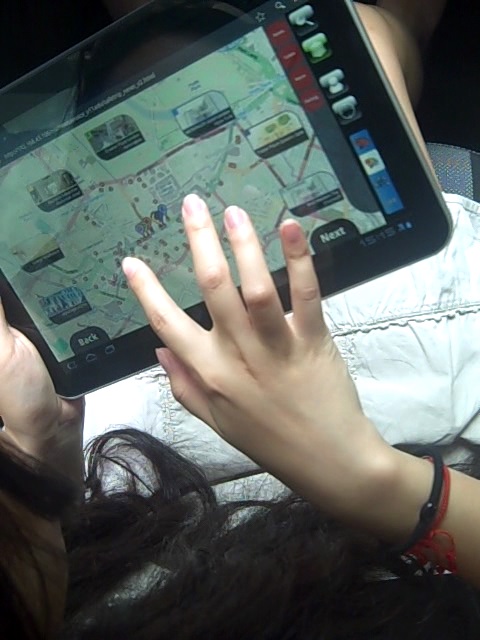

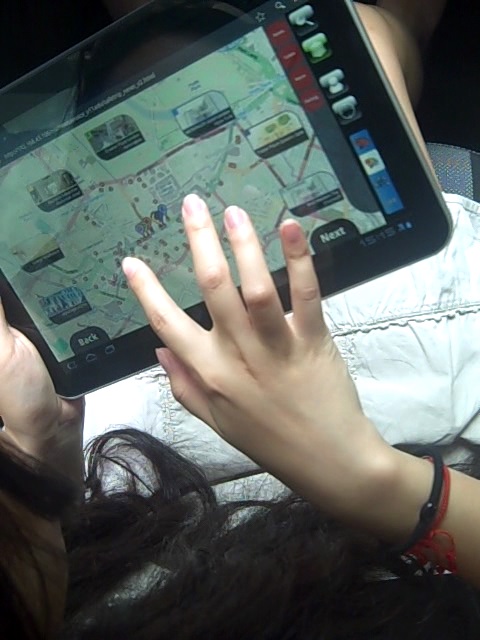

Info Explorer - Design,

Implementation and Testing |

|

|

|

|

|

|

| Driving Simulator |

|

Scientific Studies |

|

|

|

ARVA

[2008-2009]

The project centred on the development of an Augmented Reality (AR) system to visualise and interact with 3D CAD models in situ, using a tablet PC on construction sites.

Essential components of the system included:

• Visualisation module for building plans in 3D on a mobile device

• Tracking module to augment the real world with planning information in real time

• Annotation module to record multimedia information (video, audio and images) and associate these with the plans

It was funded by the EPSRC RAIS scheme to perform the groundwork in collaboration with Laing O'Rourke at the Cannon Street Station construction site.

SESAME

[2007]

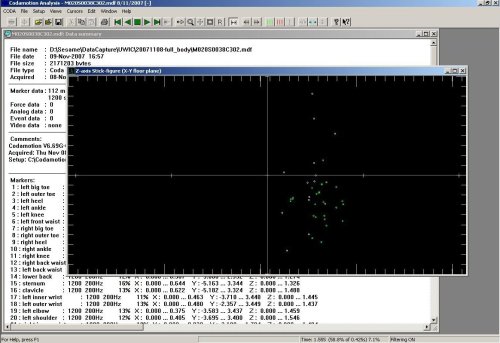

This WINES project involved several institutions; I collaborated with UWIC and the RVC in my work. My research centred on the capture and production of customisable computer animation for athletics. I used data captured from Olympic sprinters using various data capture techniques. The challenges lie in the data capture and animation of fast-moving objects using minimally invasive techniques.

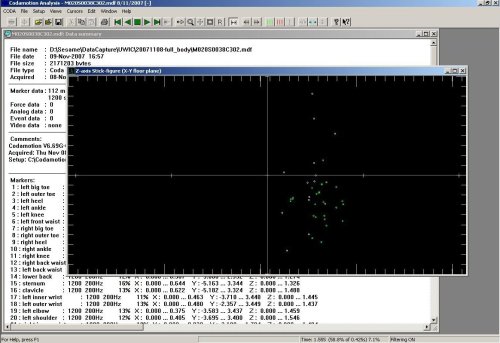

Below are some snapshots of the systems from which I have been obtaining capture data. My SESAME page is here.

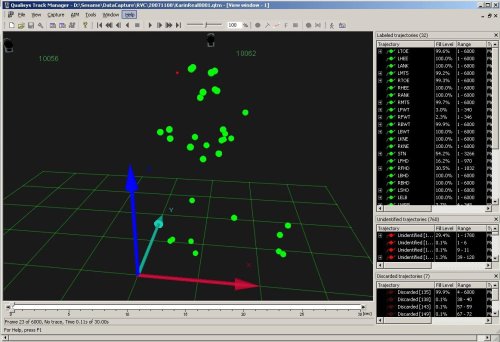

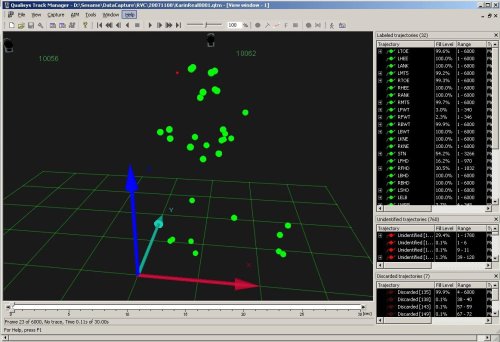

| Motion Capture Analysis using Coda System | Motion Capture Analysis using Qualisys QTM |

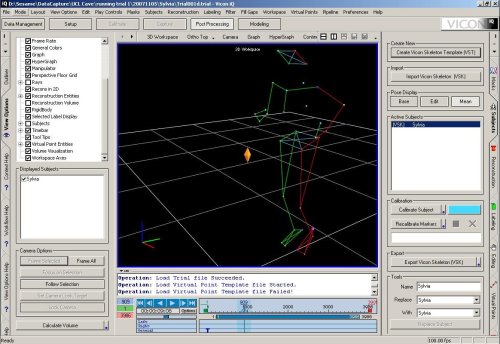

| Motion Capture Analysis and Editing using Vicon IQ | Character Animation using 3D Studio Max |

Equator IRC

[2004-2007]

My research interests in this project were mobile services, user interfaces, 3D visualisation and collaboration.

I led UCL's contribution to the Equator project by exploring a new field of augmented pedestrian navigation systems.

Project contributions:

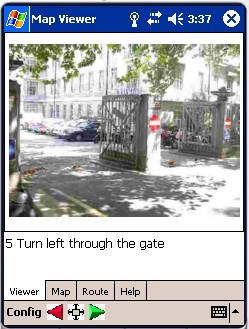

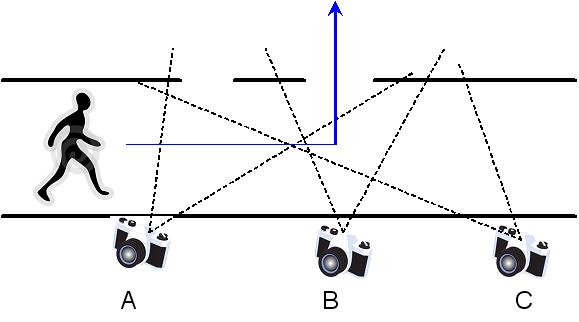

• Photo-based pedestrian navigation system using non-landmark photographs

• Pioneering development of augmented photo-based pedestrian navigation

• Design of the geo-tagging system architecture used to develop an application on PDA (used in Chawton House)

• Design and development of a mapping and navigation system on the .NET platform

• Implementation of a PDA-based system to interface with an ECG monitoring device

• Adaptation of the George Square system to UCL, porting it to Linux and PDA

• Extension of the George Square system to apply visibility processing, prioritising recommendations that are likely to be visible to the user based on real geometry from maps

• Design of a content creator for the GS system (emphasis on photos and visibility of buildings)

• C# .NET CF port (or rather reimplementation) of the GSQ Pocket PC version

• Map services for on-the-fly map generation from spatial databases (Oracle and PostGIS)
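The visibility processing mentioned in the list above can be illustrated with a minimal sketch. It is not the George Square code: it assumes building footprints are available as 2D polygons from map data, treats a recommendation as visible when the straight line from the user to it crosses no footprint edge, and uses hypothetical names (`is_visible`, `prioritise`) throughout.

```python
def _orient(a, b, c):
    """Signed area of triangle abc: > 0 counter-clockwise, < 0 clockwise."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def _crosses(p, q, a, b):
    """True if segments pq and ab properly cross (touching does not count)."""
    return (_orient(p, q, a) * _orient(p, q, b) < 0
            and _orient(a, b, p) * _orient(a, b, q) < 0)


def is_visible(user, target, footprints):
    """Line-of-sight test against building footprints (lists of 2D vertices)."""
    for polygon in footprints:
        # Pair each vertex with the next one, closing the polygon at the end.
        edges = zip(polygon, polygon[1:] + polygon[:1])
        if any(_crosses(user, target, a, b) for a, b in edges):
            return False
    return True


def prioritise(user, recommendations, footprints):
    """Order recommendations so that visible ones come first.

    recommendations: list of (name, (x, y)) pairs. sorted() is stable, so
    the incoming order is preserved within the visible and hidden groups.
    """
    return sorted(recommendations,
                  key=lambda rec: not is_visible(user, rec[1], footprints))


# Hypothetical scene: one square building between the user and the cafe.
building = [(2, -1), (4, -1), (4, 1), (2, 1)]
places = [("cafe", (6, 0)), ("statue", (0, 5))]
ranked = prioritise((0, 0), places, [building])
```

Because only proper crossings count, a target lying on a building facade is not blocked by its own wall. A sight line that passes exactly through a footprint vertex is not detected; a fuller version would need to handle that case and terrain height.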

Long-Term Habitat Ergonomics for Mars Mission

[2004]

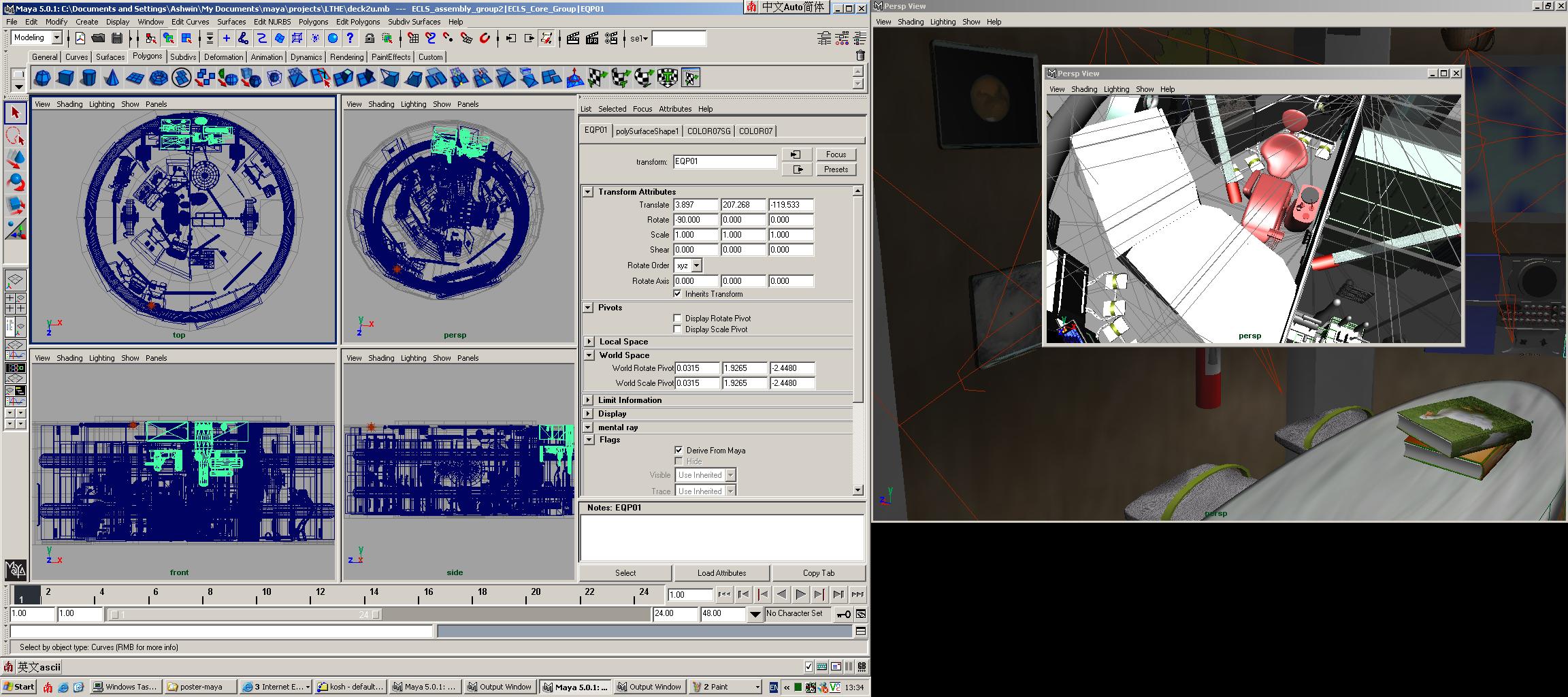

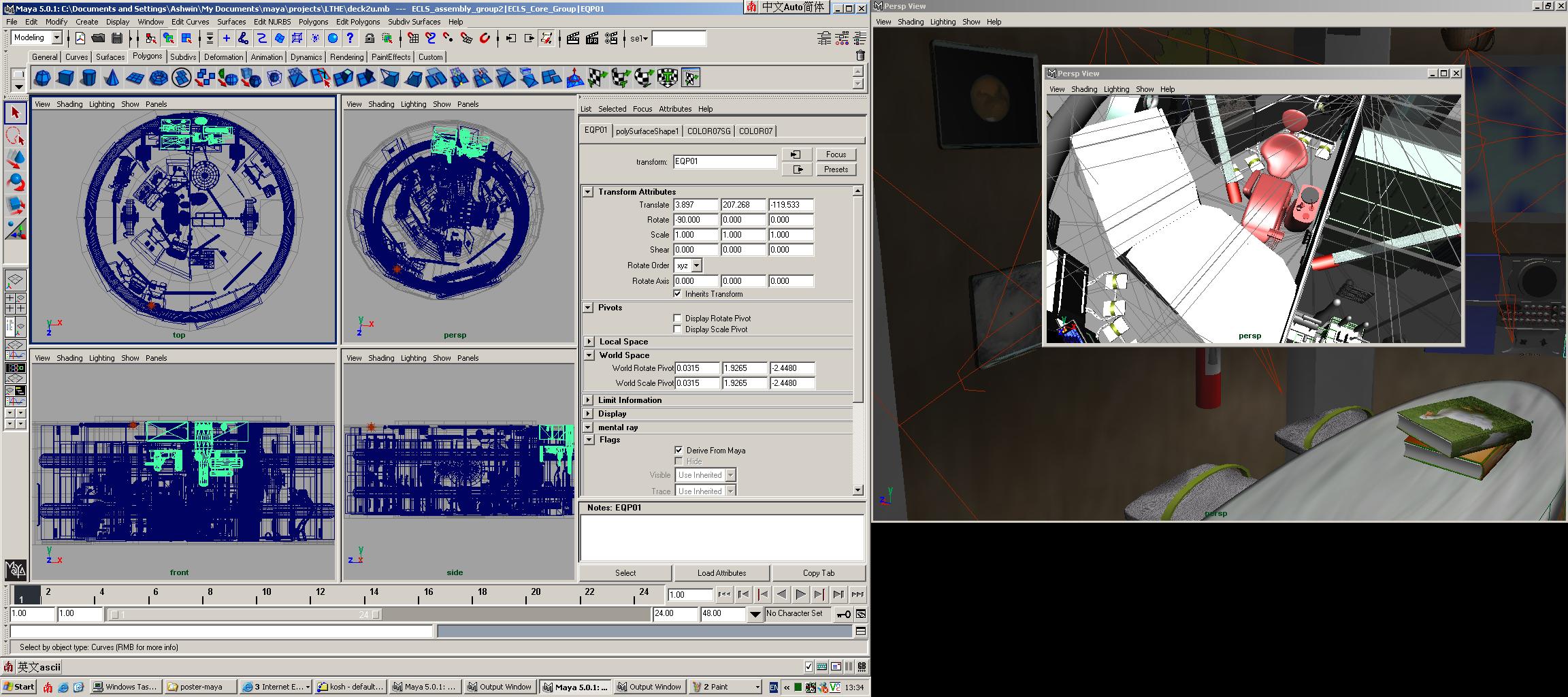

I worked on the 3D model used for the simulation of the long-term habitat during the Mars mission. The work was done in collaboration with mission specialists, astronauts and simulation experts from ESA and SAS (Belgium).

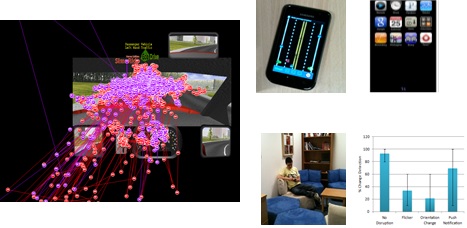

PhD

The work focuses on the development of a novel and principled solution for optimising the network usage of arbitrary, highly animated and populated distributed virtual environments. The ultimate goal is to reduce the effect of inherent networking problems, such as bandwidth limitation, congestion and lag, on the user's perceptual experience of the virtual environment. The aim is to prioritise the updates dispatched by the server to the user's viewer, in a client-server architecture, such that the most perceptually significant updates are sent as soon as possible, whilst the remaining updates are sent subject to the level of network traffic.
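The prioritisation idea can be sketched as a server-side priority queue. This is an illustration only, not the scheme developed in the thesis: the `saliency` score, the `urgent_threshold` and the per-tick byte budget are all assumptions made for the example.

```python
import heapq
import itertools
from typing import NamedTuple


class Update(NamedTuple):
    entity_id: str   # hypothetical identifier of the animated entity
    saliency: float  # hypothetical perceptual-significance score in [0, 1]
    size_bytes: int  # payload size on the wire


class UpdateScheduler:
    """Server-side queue that releases the most salient updates first."""

    def __init__(self, urgent_threshold=0.8):
        self.urgent_threshold = urgent_threshold
        self._heap = []
        self._counter = itertools.count()  # tie-breaker, keeps insertion order

    def submit(self, update):
        # heapq is a min-heap, so the saliency is negated.
        heapq.heappush(self._heap,
                       (-update.saliency, next(self._counter), update))

    def dispatch(self, budget_bytes):
        """Return the updates to send this tick, most salient first.

        Updates at or above the threshold are always sent. The others are
        sent only while the byte budget (a stand-in for the current level
        of network traffic) allows; the rest wait for a later tick.
        """
        sent = []
        while self._heap:
            update = self._heap[0][2]
            urgent = update.saliency >= self.urgent_threshold
            if not urgent and update.size_bytes > budget_bytes:
                break  # everything left is less salient; try again later
            heapq.heappop(self._heap)
            sent.append(update)
            budget_bytes -= update.size_bytes
        return sent


scheduler = UpdateScheduler(urgent_threshold=0.8)
scheduler.submit(Update("tree", 0.1, 100))
scheduler.submit(Update("avatar", 0.9, 300))
scheduler.submit(Update("door", 0.5, 150))
first_tick = scheduler.dispatch(budget_bytes=400)
second_tick = scheduler.dispatch(budget_bytes=400)
```

In this sketch the saliency score would come from the visual attention model; here it is simply supplied by the caller.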

The work investigates the theories and findings in the field of visual attention from experimental psychology and, more recently, from computer graphics and a few other related fields, with the main aim of developing a computationally viable visual attention model applicable to dynamic and interactive computer-generated environments. For this purpose, the features and intrinsic characteristics that make a virtual environment different from other computer-generated worlds and animations are analysed, with strong emphasis on the user's perspective. Building on these findings, a visual attention model comprising a top-down and a bottom-up component is devised to represent the principal mechanisms which lead to the selection of regions of a scene for further scrutiny and which contribute to the user's overall sense of a perceptually plausible environment.

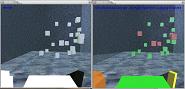

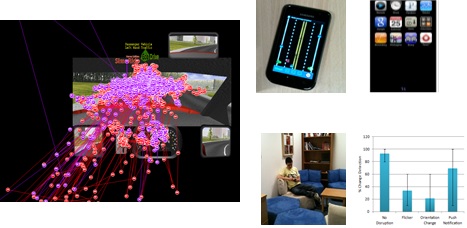

A set of experiments is carried out to investigate some of the most significant observations about visual attention within the VR context, with the aim of tuning and improving the attention model. These include a number of low-level psychophysical studies (to evaluate change detection and inattentional blindness) and a task-performance study. The most important observations are that colour and orientation changes are visually more salient, and that only a limited number of changes can be noticed simultaneously, if at all.

An efficient implementation of this model, which essentially generates an attention map by combining feature-based maps and mental maps, is evaluated through a set of experiments. Next, distributed VR system architectures which use an implementation of the attention model are conceived, weighing the impact on system resources, network and user perception. The network usage and the user's perception are evaluated using experimental test-beds of animated and populated distributed virtual environments.
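As a rough illustration of combining feature-based maps with a mental map, the sketch below normalises each map and blends them per cell. The actual combination rule is not given here, so the simple averaging of feature maps and the `top_down_weight` parameter are assumptions, as are the function names.

```python
def normalise(grid):
    """Rescale a 2D grid of non-negative values so that its maximum is 1."""
    peak = max(max(row) for row in grid)
    if peak == 0:
        return [row[:] for row in grid]
    return [[value / peak for value in row] for row in grid]


def attention_map(feature_maps, mental_map, top_down_weight=0.5):
    """Blend bottom-up feature maps with a top-down mental map.

    feature_maps: dict of name -> 2D grid (e.g. colour or orientation
                  contrast), all with the same shape as mental_map
    mental_map:   2D grid of task- or memory-driven relevance
    """
    bottom_up_maps = [normalise(grid) for grid in feature_maps.values()]
    top_down = normalise(mental_map)
    result = []
    for r in range(len(mental_map)):
        out_row = []
        for c in range(len(mental_map[0])):
            # Average the normalised feature maps (an assumed rule).
            bottom_up = sum(m[r][c] for m in bottom_up_maps) / len(bottom_up_maps)
            out_row.append((1 - top_down_weight) * bottom_up
                           + top_down_weight * top_down[r][c])
        result.append(out_row)
    return result


# Hypothetical 2x2 scene: feature contrast top-right, task interest bottom-left.
features = {
    "colour": [[0, 2], [0, 0]],
    "orientation": [[0, 4], [4, 0]],
}
mental = [[0, 0], [3, 0]]
combined = attention_map(features, mental, top_down_weight=0.5)
```

In this example the bottom-left cell scores highest, because it is supported by both a feature map and the mental map.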

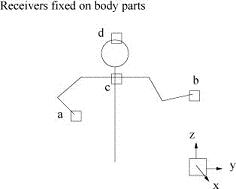

MSc

In this project, we developed an approach to interactive control of avatars in virtual environments, using an artificial neural network. The network captures the essential characteristics of real human motion, and is subsequently employed to create credible body postures from a minimal set of sensor inputs.

The technique offers an alternative to inverse kinematics for human figure control. A generalised network, trained on a population of users, can be used successfully for individuals by scaling the avatar's body to match each real human's body dimensions.

It is demonstrated that the network can predict arm positions to an accuracy better than the errors which human subjects can detect.